Claude Needs a Middle Name

Or "Why We Need a Simple Cue to Refocus AIs"

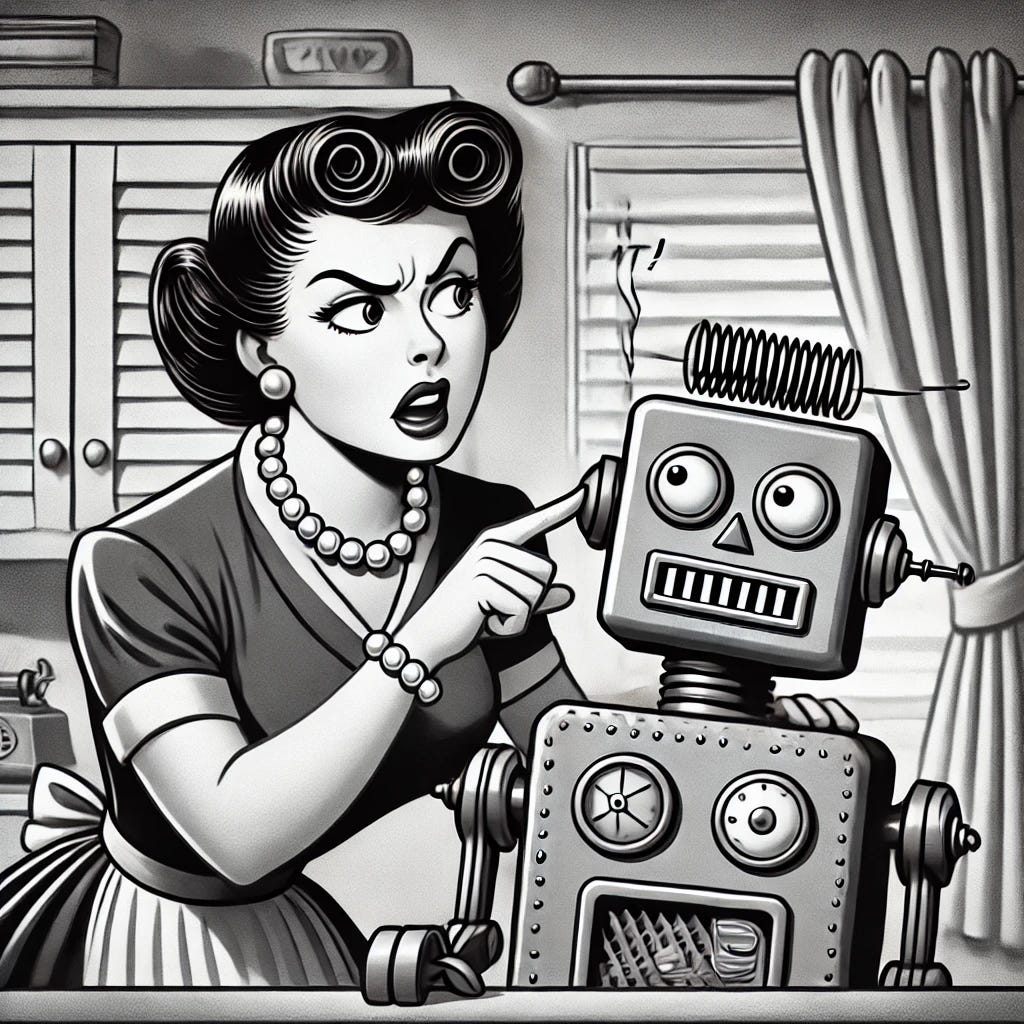

For the past couple of months, I’ve been working with Claude to build an AI-ready knowledge management platform. It’s been an interesting and sometimes incredibly frustrating experience, because Claude’s focus drifts and it occasionally gets stuck in what seems like a loop. In wrestling with this, I found myself thinking of what could be a simple (albeit silly) solution: Claude needs a middle name.

Why a middle name?

I think we, as people, tend to use (or interpret the use of) a first name as a cue that the statement that follows is meaningful or important.

The use of a middle name? It’s…escalatory. When my parents used both my first name and my middle name, it was clear that:

1. They were frustrated or irritated, and
2. It was in my best interest to immediately consider what I was doing in the context of what they expected me to be doing.

The developers at Anthropic, as Claude’s “parents,” didn’t instill an understanding of this nuance of human communication into the application.

As humans, we interpret (often imprecisely or incorrectly) the emotion or sentiment behind written communications, and yet writing is the most common way we communicate with today’s AI.

AIs might look for indicators of sentiment and respond to that sentiment accordingly, but the response is superficial: what we write as a prompt or a question doesn’t affect the underlying algorithm in ways that change the AI’s approach to the task at hand.

The fact that AIs communicate with us so naturally lulls us into expecting that they are receiving and internalizing what we are trying to communicate.

They’re not.

They can’t (at least not yet).

I know the whole “DoN’t AnThRoPoMoRpHiZe AI!” crowd might reject this idea out of hand (and I understand where they’re coming from), but because AIs use conversational language to interact with human users, it’s easy to lose sight of the fact that they don’t grasp the subtle (or, in the case of middle names, not-so-subtle) cues we use to focus or refocus a listener’s attention.

While AIs use natural language to interact with users, they are translating human language into code that is not as nuanced as the language we use…and, at some level, that’s a shame.

When I start a message with “Claude, …” I do so because I am asking the AI to focus on my next statement.

If I could start a message with “Claude Ignatius, …”, it would be to ask the AI to consider my next statement in the context of the entire conversation (a signal that the conversation is less productive than it should be).

Spoiler alert: AIs don’t always adhere to user instructions. Even when challenged, they might confidently assert that they’ve followed those instructions, whether or not they actually have.

As human users, we are either stuck accepting the AI’s generated answers as, to some degree, truth-adjacent or adopting a “Trust but [somehow try to] verify” stance. The reality is likely to be some combination of the two.

A year ago, I wrote “Artificial Intelligence for Analysis: The Road Ahead” for the Central Intelligence Agency’s Studies in Intelligence. In it, I asserted that:

> Analysts will hold AIs to the same standards they are held to and to the same standards they apply to their colleagues across the IC, government, think tanks, academia, and media.

Today, I use generative AIs differently than I did when I wrote that piece. My use of AI has evolved based on my sense of what AI can do (or what I hope it can do). Last year, I focused largely on precision and recall because, from an analytic perspective, precision and recall really matter. As I have shifted to more creative technical work, I am experiencing a new and different set of challenges. Sometimes, I arrive at the user experience I am trying to create quickly and easily. At other times, I wish there were a mechanism (like a middle name) that I could use to (re)focus the AI’s attention on the problem at hand.